How to Mix Using Multiple Reference Monitors

And not drive yourself crazy

When I first started mixing, it sometimes felt like I was redoing my work over and over until I hit my deadline and was forced to stop. My mix process back then was to mix through my main (full-range) speakers, then switch to small speakers for a pass. Then I’d switch back to my main speakers and find a totally different set of problems. I’d do a pass through a third set of speakers, and it’d open up another can of worms.

It was very hard to trust my mix decisions. I didn’t trust the rooms I was working in. I didn’t trust my speakers. I sometimes questioned my ears or my ability. When there’s that much doubt, how are you ever able to make a decision? You can’t. Constantly questioning what is “right” severely slows down the mix process.

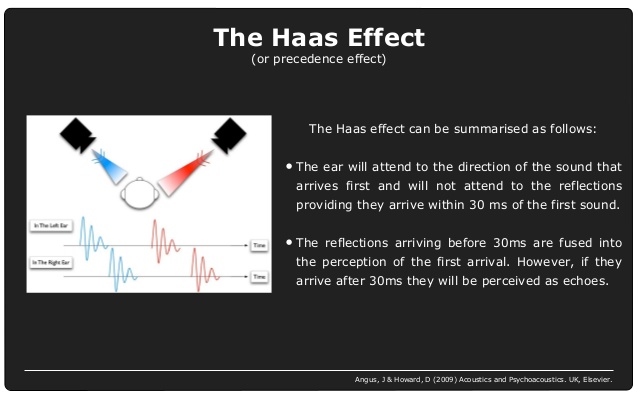

From a mixing perspective, nearly every room is flawed in some way. There are room resonances, bass management issues, less-than-ideal speaker placement, noise, reflections, or phase issues. Even a room that’s tuned by a great acoustician and considered flat can have 6 dB of variance or more! The only way to trust a room (or monitors) is to accept the room for what it is.

First and foremost, it helps to reduce as many changing variables as possible. Mix as much as you can in the same room using one set of reference monitors. Think of it as your “home base.” The goal is to have a setup that you trust, not because it sounds amazing but because you know its quirks, flaws, and strengths.

As you mix, make a mental note of the things you notice. Which frequencies are you always EQing? When you pan, is the imaging clear or muddy? Critical listening is about observation without judgment. Once you make judgments (especially that a mix sounds better or worse depending on the environment, plugin, etc.), it can turn into a psychological game. This is when you start questioning your speakers, your room, and yourself.

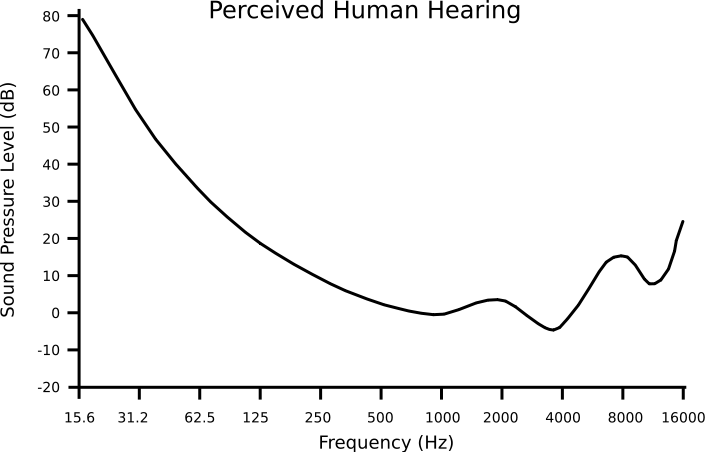

Some of the best advice I’ve ever received about mixing is “mix however makes you comfortable.” Auratone speakers (a standard found in many post-production mix rooms) make my ears ring, so I don’t use them. If I mix through a television set, I listen at the same level I listen to TV at home. I quit mixing full-range at 82 dB (which I sometimes find uncomfortably loud) and now mix closer to 78 dB, or even lower on occasion. What I gain in confidence by listening at a comfortable level far outweighs what I lose sonically by not mixing at the nominal calibrated level for a mix room.

Working in different rooms and monitoring situations can be used to your advantage. When I’m working on a film, I sometimes prefer to edit on headphones (especially to treat pops, clicks, and unwanted noises). I like to do my detail EQ work and noise reduction in a room with near-field monitors (like a home studio), which lets me hear detail that might be lost on a theatrical mix stage. If I can work on a theatrical stage, that’s the best place to deal with bass management (like mixing to the subwoofer) and mixing in 5.1.

In post-production, we don’t just change monitors; we sometimes change rooms completely. On top of that, the final mix might be going to a movie theater, to television (Blu-ray, Video on Demand), and eventually online (to laptop or cell phone listeners). We’ve got 5.1 and stereo to consider (or even 3D immersive audio). Many projects don’t have the budget for separate mixes, so sometimes you have to make decisions that are good for one listening environment and bad for another. As a mixer, I find I’m happier if I do one mix that I am really happy with rather than trying to find a middle ground. I tend to cater to the audience that will account for the most views.

It’s good to ask yourself, “what am I trying to achieve by changing monitors?” I don’t change monitors anymore unless there’s a specific reason, such as:

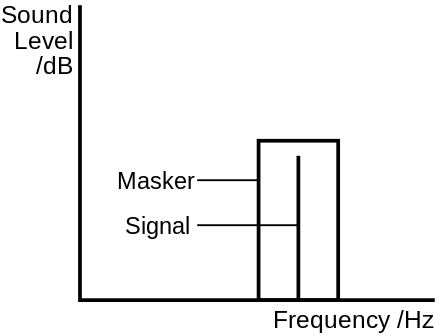

- Checking if a low-frequency instrument or sound effect disappears on small speakers

- Checking if a high-frequency instrument sticks out too much on small speakers

- Getting a second opinion. If I’m not sure I like the balance of something, I might change speakers just to get some perspective on it. But I still treat the mains as my “master” mix.

There’s definitely value in changing how you listen. I change my listening level a lot when I’m mixing film scores to hear how the mix sounds in context against dialog. If I’m mixing in 5.1, I might switch to stereo to see how something I’ve mixed translates that way. I might listen through a TV or my phone if there’s a specific question or need for it.

A big part of learning to mix well is learning how to mix poorly, too. How often do you go back to an old mix and think, “that really sucked!” when at the time you thought it was great? We do what sounds “right” until we find something new that sounds right. There are times you have to accept that your mix is the best you’re going to do that day. Tomorrow is a new day, a new mix, and a chance to do something different.
