Keeping it Real Section 3 – Mixing IEMs in 3D

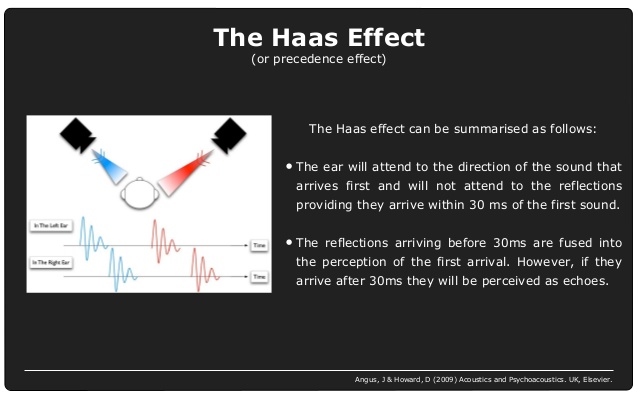

Until now, the physical constraints of IEMs – sound being delivered direct to our eardrums – have given us no way to experience the nuances of sound localisation. The fact that our moulds are in the ear means that we miss out on the out-of-body arrival of sounds and the information we glean from the travel of those sound waves around our heads and bodies.

Until now.

I recently had the pleasure of road-testing a stunning 3D in-ear monitoring system from German company Klang. My experience has convinced me that this is the next great leap forward for in-ears, almost as much of a game-changer as the 1990s introduction of IEMs in the first place, or the evolution from analogue to digital desks.

Think of a standard, high-quality stereo in-ear mix. You perceive the mix elements panned in varying degrees from dead centre all the way out to the peripheries of your ears. Maybe you’ve created some sense of depth with the different levels and EQ of those elements, maybe some atmosphere with reverbs, but that’s about as much as you can do.

Now imagine that you could take your ear moulds out and hear all of those elements placed around you acoustically in three dimensions. The relative volumes are the same, but all of a sudden there’s a sense of space and freedom as you liberate yourself from cramming all of those mix elements into the limited confines of the space between your ears. The detail in the sound of each instrument suddenly becomes a high-definition experience as inputs in similar frequency ranges no longer battle for space; some sounds feel as though they’re high in the air; others close to the ground; some are behind you; whilst others are at distances far beyond your arm’s reach.

That’s what it feels like to switch from a stereo mix to Klang 3D.

(Incidentally, going back the other way feels a bit like flying business and then returning to economy. Honestly, these guys have ruined stereo for me for life!)

Klang has used vast amounts of binaural hearing data to emulate what happens at a listener’s ear when the source is coming from outside the body. This data, gathered in lengthy experiments involving dummy heads with tiny microphones placed at the entrance to the ear to ‘hear’ sounds from different places, has enabled them to create an incredibly realistic 3D experience for in-ear monitoring. It is like virtual reality for the ears, but it’s more than that – it’s an ideal-world natural stage sound.

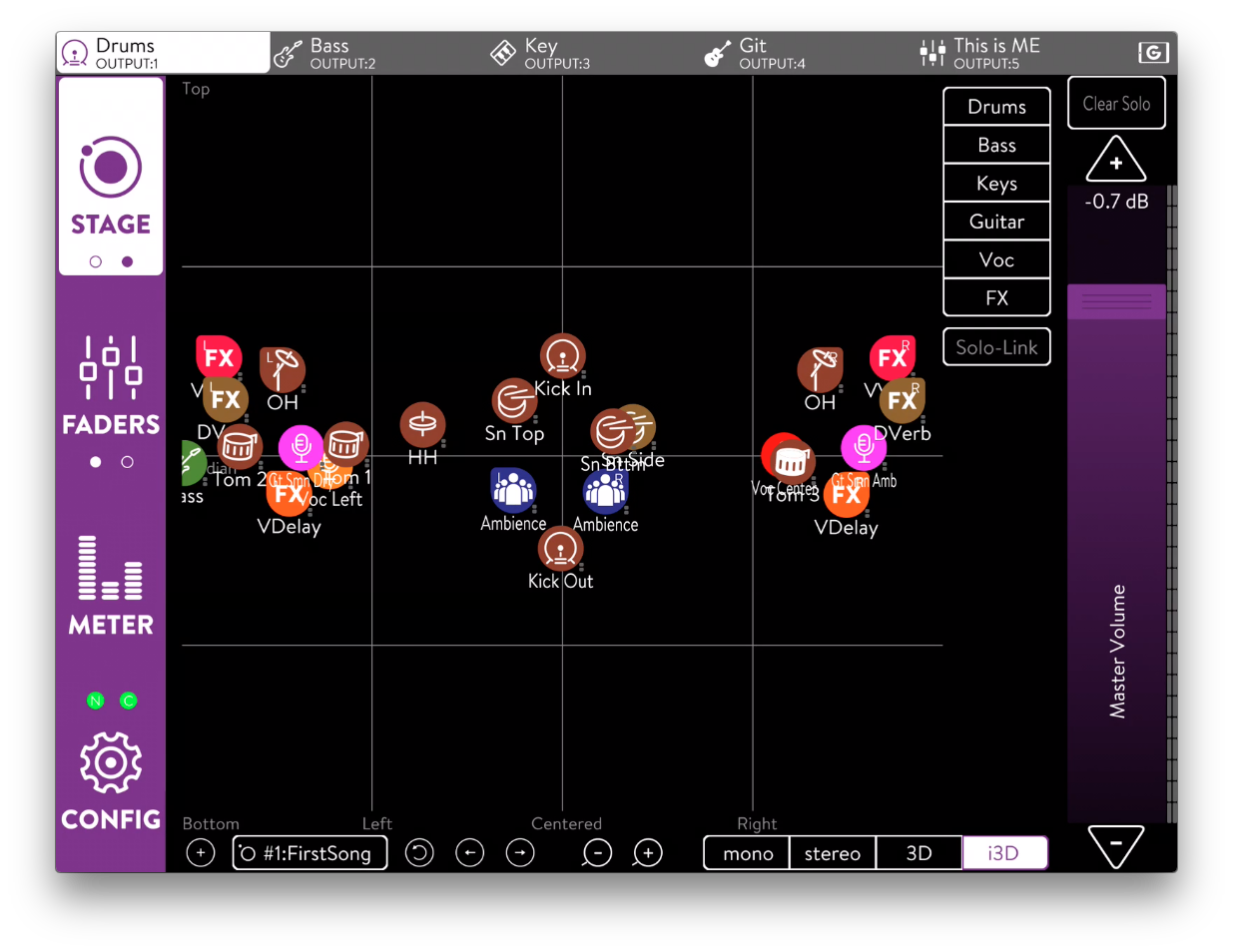

The Klang model combines all that we know about the nature of sound localisation – inter-aural time differences, inter-aural level differences, comb-filtering – with the subtle changes that we experience in frequency perception according to a sound’s location. This allows the monitor engineer to ‘place’ different inputs in various areas around the listener’s head in three-dimensional space. The incredibly user-friendly interface depicts (on a laptop or, more easily still, the touch-screen of an iPad) two different views of the listener’s environment: a bird’s eye view of the top of the head, where instruments appear on a virtual ring around your head, allowing you to place them not only to the left and right but also in front of and behind you; and a landscape view, which allows you to move them vertically – above and below your head.

As you move inputs around using the touch screen, you feel as though they are indeed coming from a different three-dimensional location, due to the way the Klang unit subtly alters the sound using binaural hearing data.
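For the technically curious, here is a rough feel for just one of those localisation cues, the inter-aural time difference. This is a minimal sketch using Woodworth's textbook spherical-head approximation; it is not Klang's processing, which I haven't seen described at this level. The head radius, speed of sound and function names are all my own assumptions for illustration.

```python
import math

# Assumed values for illustration only (not taken from Klang).
HEAD_RADIUS_M = 0.0875        # commonly quoted average head radius
SPEED_OF_SOUND_M_S = 343.0    # air at roughly 20 degrees C


def woodworth_itd(azimuth_deg: float) -> float:
    """Approximate inter-aural time difference in seconds.

    azimuth_deg: 0 is straight ahead, 90 is fully to one side.
    Uses Woodworth's spherical-head formula: (r / c) * (theta + sin(theta)).
    """
    if not 0.0 <= azimuth_deg <= 90.0:
        raise ValueError("azimuth_deg must be between 0 and 90")
    theta = math.radians(azimuth_deg)
    return HEAD_RADIUS_M / SPEED_OF_SOUND_M_S * (theta + math.sin(theta))


def itd_in_samples(azimuth_deg: float, sample_rate_hz: int = 48000) -> int:
    """The same delay expressed as a whole number of audio samples."""
    return round(woodworth_itd(azimuth_deg) * sample_rate_hz)


if __name__ == "__main__":
    for angle in (0, 30, 60, 90):
        micros = woodworth_itd(angle) * 1e6
        print(f"{angle:>2} degrees: {micros:6.1f} microseconds, "
              f"{itd_in_samples(angle)} samples at 48 kHz")
```

Even with a source hard to one side, the delay between the ears under this model is well under a millisecond, which gives some idea of how fine the timing cues are that a binaural system has to reproduce.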

So with all this newfound space, you can now place instruments wherever you like. While it seems obvious at first to place instruments on the orbit where you actually see them on stage, this is only one possible placement method.

Our brains determine the importance of a sound according to where it is coming from. A sound right in front of you, elevated slightly above your own head, is perceived as being of paramount importance, so it makes sense to put the listener’s own instrument and/or vocal here. Interestingly, I found that a critical sound positioned here didn’t require as much volume as the same sound centre-panned in a stereo mix – great news for anyone who requires some elements very loud, such as a drummer and their click.

We perceive sounds from slightly behind us with a wide left/right span as being less important, but still worth paying attention to; so for a singer I found this a good place to put keys and synth sounds, as well as a stereo electric guitar. Strings worked really well placed high and wide for an airy, slightly ethereal feel; and bass and kick felt good placed lower and directly behind me. Pitching information signals such as backing vocals and piano seemed most natural and effective placed evenly panned to the front, but narrower and lower than the strings.

The Klang Fabrik takes up to 56 inputs, and it was interesting to note that I could be even more flexible with my mixing by leaving some inputs (such as talk mics, which call for no special artistic treatment) out of the Klang domain. I simply brought the Klang outputs back into my console where I subbed them into an aux buss, to which I then added the talk mics and anything I didn’t need in the 3D arena. This retained all of the fantastic space and detail of the 3D mix, whilst allowing total freedom in the number of utility inputs.

The Klang app is free to download and comes with a demo track – all you have to do is plug your in-ears or headphones in and you can move the track inputs around and experience 3D sound for yourself. I highly recommend starting by listening in stereo (the app gives you the choice) and then switching to 3D for an A/B test – the difference really is astounding, akin to throwing open the shutters in a dark room!

I’m extremely excited to be taking a Klang system out on my next tour, and I know that the artist and band are going to be delighted by the whole new in-ear experience that this offers. The detail, space and musicality make for a truly transformative mix. The only drawback is that they, too, will find themselves ruined for stereo for life!
